Text-to-Speech (TTS) was ignored for 40 years because the technology worked well enough for its narrow use cases. Neural generation did not incrementally improve synthetic speech but transformed voice synthesis into something fundamentally different. WaveNet reduced the gap between synthetic and human speech by over 50% in 2016, turning accessibility software into AI infrastructure.

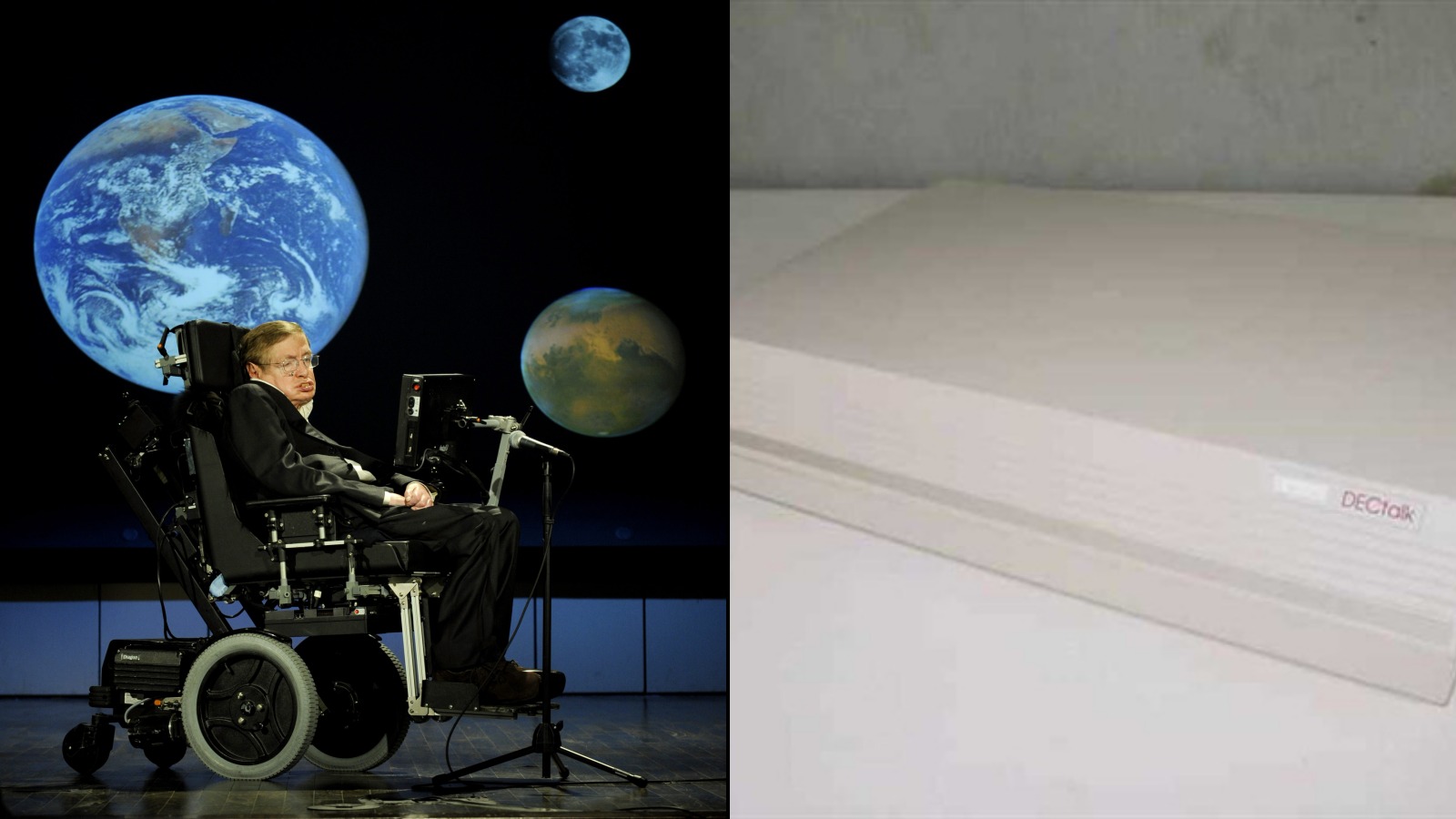

Stephen Hawking’s refusal to upgrade his 1986 voice for 32 years captures the pre-neural reality: mechanical speech was acceptable precisely because expectations were so low.

The Pre-Neural Era: When ‘Good Enough’ Defined TTS

For nearly five decades, TTS technology remained fundamentally unchanged. The approaches evolved incrementally, but the core limitation persisted: machines assembled speech from parts rather than generating it. That was enough, because the use cases demanded intelligibility, not naturalness.

Concatenative synthesis cut voice recordings into phonetic fragments and recombined them. Parametric vocoders used mathematical models to generate audio waveforms. Both methods produced speech that, as researchers noted, “often sounded mechanical and contained artifacts such as glitches, buzzes and whistles.” A mechanical voice reading a phone menu or announcing a train departure did not need to sound human. It needed to be intelligible, reliable, and available in multiple languages.
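To make the "assembled from parts" idea concrete, here is a toy sketch of concatenative synthesis. The unit names, sample values, and crossfade length are all invented for illustration; production systems selected from thousands of recorded units with far more elaborate joining logic.

```python
from typing import Dict, List

# Hypothetical unit inventory: each phonetic fragment maps to a few audio
# samples. The names and numbers are made up for this sketch.
UNITS: Dict[str, List[float]] = {
    "h-e": [0.0, 0.2, 0.4, 0.3],
    "e-l": [0.3, 0.5, 0.1, -0.2],
    "l-o": [-0.2, -0.4, -0.1, 0.0],
}


def crossfade_concat(left: List[float], right: List[float], overlap: int) -> List[float]:
    """Join two fragments, linearly blending `overlap` samples at the seam."""
    overlap = min(overlap, len(left), len(right))
    if overlap <= 0:
        return left + right
    head, tail = left[:-overlap], left[-overlap:]
    blended = []
    for i in range(overlap):
        w = (i + 1) / (overlap + 1)  # weight ramps toward the right fragment
        blended.append((1 - w) * tail[i] + w * right[i])
    return head + blended + right[overlap:]


def synthesize(unit_names: List[str], overlap: int = 2) -> List[float]:
    """Look up each stored fragment and stitch them together in order."""
    audio: List[float] = []
    for name in unit_names:
        audio = crossfade_concat(audio, UNITS[name], overlap)
    return audio


waveform = synthesize(["h-e", "e-l", "l-o"])
```

Nothing here generates sound that was not already stored. The seams between fragments are where the glitches and buzzes tended to come from.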

The research origins (1968-1983)

The first complete English TTS system emerged in 1968, built by Noriko Umeda in Japan [1]. A decade later, Dennis Klatt at MIT developed Klattalk, a parametric synthesizer that formed the foundation for decades of synthesis technology. His approach accepted mechanical imperfection as the price of utility. In 1983, DECtalk launched as a $4,000 standalone unit, bringing Klatt’s research into a commercial product [2].

Stephen Hawking and the voice that refused to change (1986)

Hawking adopted the Perfect Paul voice from DECtalk in 1986 and refused all upgrades for the next 32 years, preferring the familiar mechanical cadence over improved naturalness [3]. His choice captures the pre-neural reality: synthetic speech was acceptable precisely because expectations were so low. The voice became so iconic that it outlived the technology that created it.

Hear the difference: 1986 vs 2026

Both clips say the same sentence: “This was the future of voice. VoiceRadar covers what came after.”

The first clip was generated with Fred, a formant synthesizer shipped with every Mac since the 1980s. It shares DECtalk’s lineage: no recorded voice, no neural network, pure mathematical modeling of the human vocal tract. Apple still bundles it with macOS in 2026. It still sounds exactly like this.

The second clip was generated with ElevenLabs Titan, a neural TTS model that uses deep learning to produce speech from text. The same sentence, 40 years of technology apart. The gap between these two clips is the story this article tells.

The commercial boom (1999-2003)

What changed at the turn of the millennium was not the technology but its context. Computers, mobile phones, GPS devices, self-service kiosks, and ATMs spread into every corner of daily life. Machines needed to talk, and a commercial industry emerged to make that happen.

ReadSpeaker was founded in 1999, pioneering web-based TTS to make online content accessible. Loquendo spun out of Telecom Italia Lab in 2000 with commercial TTS that included emotion editing tools. ScanSoft bought the speech assets of Lernout & Hauspie in 2001, then merged with Nuance Communications in 2005 and took the Nuance name, consolidating the speech market and eventually dominating enterprise telephony and IVR. In 2003, Acapela Group formed from three European specialists: Babel Technologies (Belgium), Infovox (Sweden), and Elan Speech (France).

These companies powered an entire ecosystem of production applications. Interactive Voice Response (IVR) systems handled millions of phone calls daily. GPS devices spoke street names no human had recorded. Train stations, airports, and factories relied on TTS for public announcements and safety alerts. Government mandates like Section 508 in the US created sustained institutional demand.

| Founded | Company | Focus | Technology |
|---|---|---|---|
| 1994 | ScanSoft (later Nuance) | Enterprise telephony, IVR | Concatenative |
| 1999 | ReadSpeaker | Web accessibility, e-learning | Concatenative |
| 2000 | Loquendo | Multilingual TTS, emotion synthesis | Parametric + concatenative |
| 2001 | Cepstral | Lightweight embedded TTS | Parametric (Flite) |
| 2003 | Acapela Group | Multilingual voices, assistive tech | Concatenative |
| 2004 | IVONA (now Amazon Polly) | Consumer TTS, e-readers | Concatenative + Hidden Markov Model |

Major TTS companies before the neural era

The consolidation and the consumer shift (2011-2014)

Nuance acquired Loquendo for 53 million euros in 2011, absorbing one of Europe’s most advanced synthesis engines. The deal gave Nuance access to Loquendo’s multilingual voice portfolio spanning over 30 languages, strengthening its dominance in the European enterprise and telecom markets. In 2013, Amazon acquired Polish TTS company IVONA Software, which later became the foundation for Amazon Polly, bringing high-quality speech synthesis directly into the AWS ecosystem. Then in 2014, Amazon launched Alexa and the Echo, and TTS moved from enterprise infrastructure into consumer homes for the first time at scale. Within two years, over 5 million Echo devices had been sold in the US alone (Business Insider, January 2016).

The Neural Revolution: WaveNet and the End of Concatenation

WaveNet represented the first AI model to generate natural-sounding speech by directly modeling raw audio waveforms, sample by sample. The breakthrough was not just technical but conceptual: instead of assembling pre-recorded fragments, the system learned the statistical patterns that make speech sound human.

The performance gap closed dramatically. WaveNet reduced the difference between synthetic and human speech by more than 50% according to subjective listener evaluations [4]. This was not incremental improvement but a fundamental shift in what synthetic speech could achieve. The system used deep convolutional neural networks to predict each audio sample based on all previous samples, learning patterns invisible to traditional parametric approaches.
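Predicting each sample from all previous ones corresponds to the autoregressive factorization used in the WaveNet paper. Writing the waveform as $\mathbf{x} = (x_1, \ldots, x_T)$, the model learns the joint distribution as a product of per-sample conditionals:

```latex
p(\mathbf{x}) = \prod_{t=1}^{T} p\left(x_t \mid x_1, \ldots, x_{t-1}\right)
```

Generating audio means sampling $x_t$ one step at a time, which is also why naive inference is slow: step $t$ cannot begin until step $t-1$ has finished.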

Initial implementations faced severe computational constraints. Early WaveNet generated audio far slower than real time, needing on the order of a minute of compute for each second of speech, which made real-time synthesis impossible.

Google’s engineering team solved this through Parallel WaveNet, a distillation technique that achieved a 1,000x speedup by 2017 [5]. The optimized system could generate one second of speech in just 50 milliseconds, finally enabling practical deployment.
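A quick way to read these figures is the real-time factor (RTF): compute time divided by audio duration. The sketch below takes the two numbers in the paragraph above at face value and works backwards. The function and variable names are illustrative, not part of any library.

```python
def real_time_factor(compute_seconds: float, audio_seconds: float) -> float:
    """Compute time divided by audio duration; below 1.0 is faster than real time."""
    return compute_seconds / audio_seconds


# Figures quoted in the text: 50 ms of compute per second of speech,
# reached after a 1,000x speedup.
parallel_rtf = real_time_factor(0.050, 1.0)
speedup = 1000
implied_original_rtf = parallel_rtf * speedup  # RTF implied before the speedup

print(f"Parallel WaveNet RTF: {parallel_rtf:.3f}")
print(f"Implied original RTF: {implied_original_rtf:.0f}")
```

An RTF of 0.05 means the system produces audio 20 times faster than it plays back. The same arithmetic implies roughly 50 seconds of compute per second of audio before the speedup.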

Tacotron Integration and Human Parity

Tacotron 2, released in December 2017, combined sequence-to-sequence modeling with WaveNet vocoding to achieve near-human performance metrics. The system scored 4.53 on Mean Opinion Score (MOS) evaluations, compared to 4.58 for professionally recorded human speech [6]. MOS is the standard subjective quality metric for speech synthesis: human listeners rate naturalness on a scale from 1 (completely unnatural) to 5 (completely natural), and the ratings are averaged. The 0.05-point gap led the authors to describe the two scores as comparable (Shen et al., 2018), though results vary across listening conditions and speaker familiarity. This represented a dramatic narrowing of the quality gap that had defined synthetic speech for decades, though perfect human parity across all contexts remained elusive.
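As a rough illustration of how a MOS figure is produced, the sketch below averages 1-to-5 ratings and attaches an approximate 95% confidence interval using a normal approximation. The ratings are invented for the example and are not data from the Tacotron 2 evaluation.

```python
import math
import statistics
from typing import List, Tuple


def mean_opinion_score(ratings: List[int]) -> Tuple[float, float]:
    """Return (MOS, half-width of an approximate 95% confidence interval)."""
    if any(r < 1 or r > 5 for r in ratings):
        raise ValueError("MOS ratings must be on the 1-5 scale")
    mos = statistics.mean(ratings)
    # Normal approximation; needs at least two ratings for a sample std dev.
    half_width = 1.96 * statistics.stdev(ratings) / math.sqrt(len(ratings))
    return mos, half_width


# Made-up listener ratings for two hypothetical systems.
synthetic = [5, 4, 5, 4, 5, 5, 4, 4, 5, 4]
recorded = [5, 5, 4, 5, 4, 5, 5, 4, 5, 4]

for label, ratings in (("synthetic", synthetic), ("recorded", recorded)):
    mos, hw = mean_opinion_score(ratings)
    print(f"{label}: MOS {mos:.2f} +/- {hw:.2f}")
```

With only ten ratings per system the two intervals overlap heavily. Published evaluations collect far more ratings so that small gaps can be interpreted at all.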

The technical architecture separated text analysis from audio generation, allowing each component to specialize. Tacotron handled the linguistic complexity of converting text to mel-spectrograms, while WaveNet focused on generating natural-sounding audio from those spectrograms. This division of labor became the template for modern neural TTS systems.

The Market Explosion: TTS as Core Infrastructure

The global text-to-speech market was valued at between $3.6 billion and $4.3 billion in 2025, according to Business Research Insights and Expert Market Research [7]. Industry analysts project growth rates of 12 to 15 percent annually through 2035. The expansion is driven by the transformation from assistive technology to core infrastructure. Neural TTS technology now dominates market share, while automotive applications lead growth as voice interfaces become standard in vehicles.
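To see what those growth rates imply, the sketch below compounds the 2025 valuation range forward ten years. Pairing the low valuation with the low rate and the high valuation with the high rate is an assumption made here to bracket the outcome, not a forecast from either research firm.

```python
def project(value_billion: float, annual_growth: float, years: int) -> float:
    """Compound a starting value forward at a fixed annual growth rate."""
    return value_billion * (1 + annual_growth) ** years


low = project(3.6, 0.12, 10)   # low valuation, 12% growth, 2025 to 2035
high = project(4.3, 0.15, 10)  # high valuation, 15% growth, 2025 to 2035

print(f"Implied 2035 range: ${low:.1f}B to ${high:.1f}B")
```

Under these assumptions the market would reach roughly $11 billion to $17 billion by 2035, about three to four times its 2025 size.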

North America commands a significant portion of the global market, but Asia-Pacific represents the fastest-growing region as mobile-first markets adopt voice interfaces. The geographic distribution reflects both regulatory drivers and infrastructure maturity, with accessibility mandates like Section 508 in the US, WCAG compliance requirements, and the European Accessibility Act (EAA) creating sustained demand.

The turning point came when voice moved from enterprise tooling into consumer homes. Amazon launched the Echo in 2014, and within four years smart speaker adoption went from zero to 47 million users in the US alone (Voicebot.ai, 2022) [8]. Voice-enabled devices normalized the expectation that machines should speak naturally, not just intelligibly. Conversational AI agents, content automation, and in-car assistants now represent the primary volume drivers, and TTS sits at the core of all of them.

Enterprise adoption accelerated as API pricing reached practical thresholds. Where early TTS required expensive dedicated hardware, modern neural TTS operates as cloud infrastructure with usage-based pricing that scales from prototype to production without capital investment.

The Developer Landscape: APIs, Latency, and Trade-offs

Modern TTS APIs compete on four primary dimensions: latency, voice quality, language coverage, and voice cloning capabilities. The performance envelope has shifted from “acceptable” synthetic speech to real-time generation with sub-200 millisecond latency and near-human quality across multiple languages.

Latency defines real-time application viability. Leading providers now achieve sub-200ms time to first byte (TTFB), with some newer systems reaching sub-120ms P90 latency. These performance levels enable voice agents that respond within human conversation timing, eliminating the artificial pauses that marked earlier generations.
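A P90 figure means 90 percent of requests were at least that fast. The sketch below computes it with the nearest-rank method over a set of time-to-first-byte measurements. The sample values are hypothetical, and monitoring tools may use a different interpolation rule.

```python
import math
from typing import List


def percentile(samples: List[float], pct: float) -> float:
    """Nearest-rank percentile: smallest sample with at least pct% of values at or below it."""
    if not samples:
        raise ValueError("need at least one sample")
    ordered = sorted(samples)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


# Hypothetical TTFB measurements in milliseconds, including one slow outlier.
ttfb_ms = [95, 102, 88, 110, 97, 180, 105, 92, 99, 115]

p50 = percentile(ttfb_ms, 50)
p90 = percentile(ttfb_ms, 90)
print(f"P50 = {p50} ms, P90 = {p90} ms")
```

Reporting P90 rather than the mean keeps a single slow request (180 ms here) from being averaged away, while still describing what most users experience.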

Pricing Architecture and Voice Selection

The pricing landscape illustrates how dramatically the market has fragmented. What once required a $4,000 hardware unit now costs as little as $4 per million characters on one end, or $120 to $200 per million on the other. That 30x to 50x price range within the same technology category reflects fundamentally different product strategies: commodity infrastructure versus premium voice quality.

| Provider | Price/1M chars | Free tier | Source |
|---|---|---|---|
| Amazon Polly Standard | $4 | 5M chars/month (first 12 months) | aws.amazon.com/polly/pricing |
| Google Cloud Standard | $4 | 4M chars/month | cloud.google.com/text-to-speech/pricing |
| OpenAI TTS | $15 | None | platform.openai.com/pricing |
| Google Cloud WaveNet | $16 | 1M chars/month | cloud.google.com/text-to-speech/pricing |
| Azure AI Speech Neural | $16 | 500K chars/month | azure.microsoft.com/pricing |
| Amazon Polly Neural | $16 | 1M chars/month (first 12 months) | aws.amazon.com/polly/pricing |
| OpenAI TTS HD | $30 | None | platform.openai.com/pricing |
| Deepgram Aura-2 | $30 | $200 credits | deepgram.com/pricing |
| Google Cloud Chirp 3 HD | $30 | None | cloud.google.com/text-to-speech/pricing |
| ElevenLabs | $120-$200 | 10K credits/month | elevenlabs.io/pricing |

TTS API pricing as of March 2026, per 1M characters
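The per-million prices translate into monthly bills with simple arithmetic. The sketch below uses a few rows from the table above and a hypothetical workload of 2.5 million characters per month. It ignores free tiers, volume discounts, and credit-based plans, so treat the output as a rough comparison only.

```python
from typing import Dict

# USD per 1M characters, copied from the table above.
PRICE_PER_MILLION: Dict[str, float] = {
    "Amazon Polly Standard": 4.0,
    "OpenAI TTS": 15.0,
    "Google Cloud WaveNet": 16.0,
    "Deepgram Aura-2": 30.0,
}


def monthly_cost(provider: str, characters: int) -> float:
    """Usage-based cost in USD, ignoring free tiers and discounts."""
    return characters / 1_000_000 * PRICE_PER_MILLION[provider]


workload = 2_500_000  # hypothetical monthly character volume
for provider in PRICE_PER_MILLION:
    print(f"{provider}: ${monthly_cost(provider, workload):.2f}")

# The spread quoted in the text: premium pricing relative to the $4 floor.
low_ratio = 120 / 4
high_ratio = 200 / 4
print(f"Premium vs commodity: {low_ratio:.0f}x to {high_ratio:.0f}x")
```

At this volume the commodity tier costs $10 a month, while the same text at $120 to $200 per million would cost $300 to $500.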

Voice selection became a competitive differentiator. While traditional systems offered a handful of voices, modern providers offer hundreds to thousands of options, enabling applications to match voices to specific use cases, demographics, or brand requirements.

Voice cloning emerged as the most technically demanding feature. As of 2025, systems require as little as 15 seconds of audio to create recognizable voice clones [9], with some providers claiming effective cloning from shorter samples. The technical capability exists, but deployment remains constrained by safety and ethical considerations.

Voice Cloning and the Ethics Frontier

Voice cloning represents both TTS technology’s greatest achievement and its most dangerous application. The technical barriers have collapsed while the societal frameworks for managing the consequences lag years behind deployment.

The fraud statistics reveal the scope of misuse. In a 2023 McAfee survey, one in four adults reported having experienced an AI voice scam or knowing someone who had [10]. Deepfake-related fraud has resulted in substantial losses. In January 2024, a finance employee at engineering firm Arup transferred approximately $25 million after joining a video call where the CFO and colleagues were all AI-generated deepfakes (CNN, May 2024) [11].

Technical capability outpaced deployment wisdom. While systems can clone voices from minimal audio samples, major providers have chosen restricted release strategies.

OpenAI developed Voice Engine in late 2022 but limited it to preview access rather than general availability, citing the need for society to adapt to synthetic voice capabilities before widespread deployment.

Legal and Technical Countermeasures

Regulatory frameworks are emerging but remain fragmented. The EU AI Act includes transparency requirements for synthetic media, while individual US states have enacted deepfake-specific legislation. California’s law creates criminal penalties for malicious deepfake creation, but enforcement across jurisdictions remains challenging.

Technical countermeasures focus on detection rather than prevention. Watermarking schemes embed inaudible signatures in synthetic audio, while detection algorithms attempt to identify synthetic speech characteristics. However, the adversarial nature of the problem means detection methods face constant pressure from improving generation techniques.

The Road Ahead: Conversational AI and Real-Time Voice Agents

The 2024-2025 period marked a breakthrough in orchestrated speech pipelines that combine speech-to-text, large language models, and text-to-speech for real-time conversational agents. This integration transformed TTS from a content generation tool into the output layer of interactive AI systems.
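The orchestration pattern can be sketched as three stages chained per conversational turn, with timing recorded for each. Everything below is a stand-in: the stage signatures and the `fake_*` functions are hypothetical, and real deployments stream partial results between stages instead of waiting for each one to finish.

```python
import time
from typing import Callable, Dict, Tuple

# Hypothetical stage signatures; actual provider SDKs differ.
SpeechToText = Callable[[bytes], str]
LanguageModel = Callable[[str], str]
TextToSpeech = Callable[[str], bytes]


def run_turn(
    audio_in: bytes,
    stt: SpeechToText,
    llm: LanguageModel,
    tts: TextToSpeech,
) -> Tuple[bytes, Dict[str, float]]:
    """Run one conversational turn and record how long each stage took."""
    timings: Dict[str, float] = {}

    start = time.perf_counter()
    transcript = stt(audio_in)
    timings["stt"] = time.perf_counter() - start

    start = time.perf_counter()
    reply_text = llm(transcript)
    timings["llm"] = time.perf_counter() - start

    start = time.perf_counter()
    audio_out = tts(reply_text)
    timings["tts"] = time.perf_counter() - start

    timings["total"] = timings["stt"] + timings["llm"] + timings["tts"]
    return audio_out, timings


# Stand-in stages so the sketch runs without any external service.
def fake_stt(audio: bytes) -> str:
    return audio.decode("utf-8")


def fake_llm(prompt: str) -> str:
    return f"You said: {prompt}"


def fake_tts(text: str) -> bytes:
    return text.encode("utf-8")


audio_out, timings = run_turn(b"hello", fake_stt, fake_llm, fake_tts)
```

In a sequential chain like this one, the user-perceived delay is the sum of all three stages, which is why TTS time to first byte matters so much in voice agents.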

Speech-to-speech technology became commercially viable with systems like OpenAI’s Voice mode and Realtime API. These implementations eliminate the visible boundaries between speech recognition, language processing, and speech synthesis, creating fluid conversational experiences that approach human-to-human interaction quality.

The latest model generations introduce instruction-following capabilities for speech delivery. Developers can now specify not just what to say but how to say it: “talk like a sympathetic customer service agent” or “explain with enthusiasm.” This complements SSML markup, which already allowed fine-grained control over pronunciation, pauses, and emphasis at the text level. This control over delivery style represents another fundamental shift from mechanical reproduction to intelligent expression.
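For comparison with instruction-style prompts, this is roughly what text-level control through SSML looks like. The sketch builds a small document with the standard `emphasis` and `break` elements using Python's built-in XML library. The helper name and the sentence are invented for the example, and providers support different subsets of SSML, so check the target API before relying on a given tag.

```python
import xml.etree.ElementTree as ET


def build_ssml(before: str, emphasized: str, pause_ms: int, after: str) -> str:
    """Build an SSML string with one emphasized phrase followed by a pause."""
    speak = ET.Element("speak")
    speak.text = before
    emphasis = ET.SubElement(speak, "emphasis", level="strong")
    emphasis.text = emphasized
    emphasis.tail = "."
    pause = ET.SubElement(speak, "break", time=f"{pause_ms}ms")
    pause.tail = after
    # ElementTree escapes characters such as & and < in the text for us.
    return ET.tostring(speak, encoding="unicode")


ssml = build_ssml("Your order has ", "shipped", 400, " It should arrive on Friday.")
print(ssml)
```

SSML pins down individual words and pauses, whereas a style instruction describes the delivery of the whole utterance. The two operate at different levels and can be used together where a provider supports both.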

On-Device and Privacy-Preserving Synthesis

On-device TTS addresses privacy concerns while reducing latency and infrastructure costs. Systems like Neuphonic NeuTTS Air enable real-time synthesis without cloud dependencies, keeping sensitive text content local while maintaining quality comparable to cloud-based systems.

The technical challenge involves model compression without quality loss. Edge deployment requires models that fit within mobile device memory and compute constraints while delivering neural-quality output. Success in this area could eliminate the privacy trade-offs that currently constrain TTS deployment in sensitive applications.

Real-time multilingual translation integrated with TTS creates cross-language voice communication systems. Users can speak in their native language while listeners hear fluent speech in their preferred language, using the speaker’s cloned voice characteristics. This capability transforms international communication, education, and media consumption.

Conclusion

Neural TTS technology has transformed from a niche assistive tool into the voice layer of modern AI infrastructure, but this transformation remains incomplete. The technical capabilities now approach human parity in controlled conditions, while deployment constraints stem from ethical rather than technical limitations. Organizations integrating TTS should prioritize providers with robust safety frameworks and voice authentication systems, as the technology’s misuse potential continues to outpace regulatory development.

1. Helsinki University of Technology, “History of Speech Synthesis,” 1999. research.spa.aalto.fi
2. Computer History Museum, “How DECtalk Gave Voice to a Genius,” July 2019. computerhistory.org
3. MIT Press Reader, “Stephen Hawking’s Eternal Voice,” December 2024. thereader.mitpress.mit.edu
4. DeepMind, “WaveNet: A Generative Model for Raw Audio,” September 2016. deepmind.google
5. DeepMind, “WaveNet Research Breakthroughs,” 2017. deepmind.google
6. Google Research, “Tacotron 2: Generating Human-like Speech from Text,” December 2017. google.github.io
7. Business Research Insights, “Text-To-Speech Market Trend, Size and Share,” 2025. businessresearchinsights.com; Expert Market Research, “Text-to-Speech Market Size and Industry Growth,” 2025. expertmarketresearch.com
8. Voicebot.ai, “The Rise and Stall of the U.S. Smart Speaker Market,” March 2022. voicebot.ai
9. OpenAI, “Navigating the Challenges and Opportunities of Synthetic Voices,” March 2024. openai.com
10. McAfee, “The Artificial Imposter,” 2023. mcafee.com
11. CNN, “Arup revealed as victim of $25 million deepfake scam involving Hong Kong employee,” May 2024. cnn.com